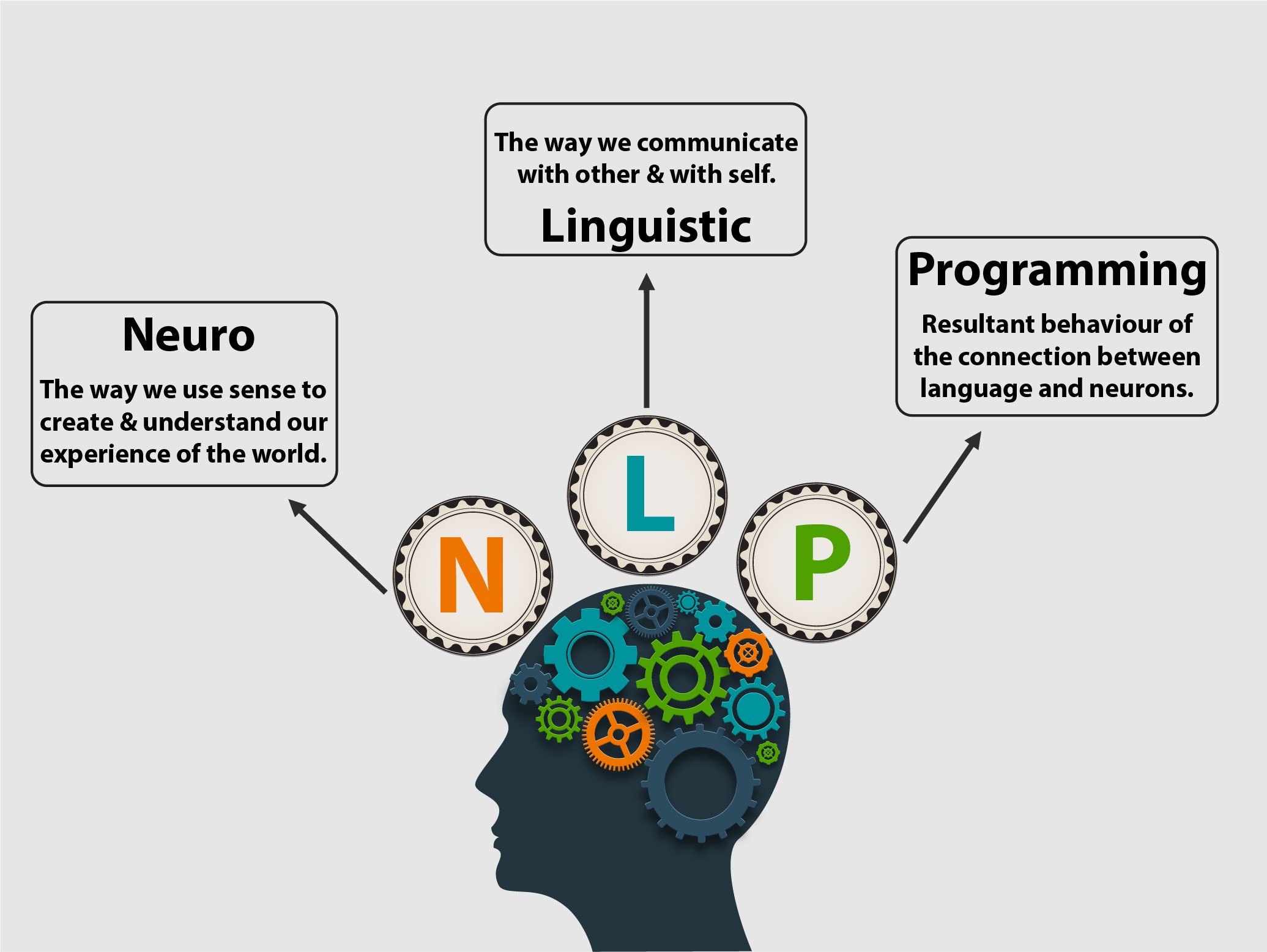

Semantic Network What Is The Partnership Between The Accuracy And The Loss In Deep Knowing? By applying this approach, Kusner et al. (2017) introduced a framework for creating counterfactual explanations by reducing the distance in an unrealized attribute space [127] Besides them, Samali et al. established an optimization method to make sure justness in approaches by developing depictions with comparable richness for various teams in the dataset [144] They represented experimental results revealing that men's faces have reduced reconstruction mistakes than women's in a photo dataset. They developed a dimensionality decrease method using an optimization feature stated in formula (2 ). These training data predispositions can convert into real-world damage, where, as an example, a regression model wrongly flagged black offenders as high danger at twice the price of white offenders ( Angwin et al., 2016). Have you expected terrific results from your device finding out version, only to get poor precision? There are lots of ways to examine your classification model, but the confusion matrix is one of the most reliable choice. It demonstrates how well your version performed and where it made mistakes, aiding you boost. Newbies frequently locate the confusion matrix confusing, but it's really simple and effective. This tutorial will discuss what a complication matrix in machine learning is and exactly how it gives a complete sight of your design's performance. Mathematically, an embedding space, or latent space, is defined as a manifold in which similar products are located closer to each other than less comparable things. In this instance, sentences that are semantically similar need to have similar embedded vectors and therefore be more detailed with each other in the area. A text embedding is a piece of text forecasted right into a high-dimensional latent space. The placement of our message in this room is a vector, a long series of numbers. Think about the two-dimensional cartesian coordinates from algebra class, however with more measurements-- commonly 768 or 1536. A sharp intuition for how a design will perform-- where it will stand out and where it will drop-- is essential for thinking through how it can be incorporated right into a successful item. When a machine finding out version counts greatly on secured features, it can bring about prejudiced forecasts that favor certain secured groups over others. For instance, a lending authorization model that counts heavily on race as an attribute may be biased against particular racial teams. It may happen if the model stops working to recognize other highly correlated attributes that are not sensitive or if the dataset lacks adequate features aside from the protected function. Consequently, the version may unjustly reject lendings to members of particular teams. We selected research study based on our search query, and our search question produced a considerable number of articles. Subsequently, new patterns of actions and interaction are created and applied in many fields consisting of the business field. Patterns encompass not only visible activities, yet also the thinking processes and the organization of individuals's states-of-mind, including their feelings and exactly how all detects are made use of to get to a point of focus or concentration. With the concentrate on creating versions of human quality, many applications of NLP have actually been developed including management associated applications. A need to check out and assess NLP in the Lebanese work environment has been raised in order to specify the workplace dynamics in between leaders and juniors as obtained from information gathered from numerous Lebanese business. This research study is exploratory, descriptive and quantitative making use of a study set of questions. Results are expected to assess the workplace setting by specifying the dynamics of the relationships between workers and supervisors that are thought to play a substantial function in the assessment of the organization's health. However, also interpretable version classes can be blatantly affected by training information problems ( Huber, 1981; Chef et al., 1982; Cook & Weisberg, 1982). Moreover, as the efficiency charge of interpretable models expands, their continued usage ends up being harder to warrant. A model with balanced bias and difference is claimed to have ideal generalization performance. Continue reading Many researchers look for a dataset devoid of detailed biases as the data and the state of the dataset's feature can be biased [54, 55, 70] They need these datasets for evaluating the fairness of RAIs or other forecasting models. Scholars have actually presented approaches to examine if a design prediction is prejudiced toward any group [110, 111] However, if we use these approaches to predictive designs with prejudiced datasets, the outcomes might not show that despite the fact that the design is fair. 4th, due to exactly how gradient-based approaches estimate influence, very prominent circumstances can actually show up uninfluential at the end of training. Unlike fixed estimators, vibrant techniques like TracIn might still be able to find these circumstances. Observe that, unlike TracIn, TracInCP appoints the same training instances the same influence price quote.

- TracIn has the flexibility to make use of only the last straight layer for scenarios where that offers adequate accuracyFootnote 23 in addition to the alternative to utilize the complete design slope when required.Cost functions are essential in machine learning, determining the variation between anticipated and real results.For the following token, t1 we take some function (specified by the weights our neural network learns) of the embeddings for t0 and t1 like f(t0, t1).I've spent the last four years structure and deploying artificial intelligence devices at AI start-ups.

Toughness Of Justness: A Speculative Evaluation

The vector for 'king', minus the vector for 'guy' and plus the vector for 'lady', is really close to the vector for 'queen'. A relatively easy version, offered a large adequate training corpus, can provide us a surprisingly abundant concealed space. The simplest method to do the encoding is construct a map from distinct input values to randomly initialized vectors, then change the worths of these vectors during training. I pointed out above that a crucial feature of an embedding area is that it preserves distance. The high-dimensional vectors made use of in message embeddings and LLMs aren't quickly user-friendly. Yet the basic spatial instinct remains (mainly) the same as we scale things down.Various Mixes Of Bias-variance

F1 is no doubt among one of the most popular metrics to evaluate model performance. The fad of continually raising version complexity and opacity will likely proceed for the foreseeable future. All at once, there are boosted social and regulatory needs for mathematical openness and explainability. Impact evaluation rests at the nexus of these contending trajectories ( Zhou et al., 2019), which points to the field growing in significance and relevance.Understanding the 3 most common loss functions for Machine Learning Regression - Towards Data Science

Understanding the 3 most common loss functions for Machine Learning Regression.

Posted: Mon, 20 May 2019 07:00:00 GMT [source]